Google has just added a new feature to their Fetch as Google tool called “Fetch and Render”. I’m not gonna go into details about the benefits of using this tool, but it’s worth mentioning the general idea: get your content indexed faster, whether it is new or old. Most webmasters make sure that any new content is included in their sitemap, resubmit the sitemap to the search engines, and then just sit and wait. It may take days... it may take months. Submitting your link to Fetch as Google, on the other hand, is like pressing the magic SEO button. Google says that they will crawl the URL within a day, but I have seen pages showing up in the SERPs literally 10 minutes after submitting. Have you ever had websites copying your content and then outranking you? Well, getting your original indexed first should make that a lot less likely. And last but not least, it can show you if your website has been hacked. Imagine your website appearing in search results for “Erotica” when you know for sure that you do not have this keyword on your page. You only have to use Fetch as Google to see what Googlebot is seeing, which in our imaginary case would be the word “Erotica” and probably other spammy terms.
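If you want to double-check the HTML that Fetch as Google shows you, a small script can do the scanning for you. The sketch below is only an illustration: it assumes you have copied the fetched HTML into a string yourself, and the helper name `find_unexpected_terms`, the sample markup and the term list are all made up for this example. It is not part of any Google tool.

```python
import re
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def find_unexpected_terms(html, terms):
    """Return the terms (hypothetical watch list) that occur as whole words in the page text."""
    extractor = TextExtractor()
    extractor.feed(html)
    extractor.close()
    text = " ".join(extractor.chunks).lower()
    return [
        term for term in terms
        if re.search(r"\b" + re.escape(term.lower()) + r"\b", text)
    ]


# Made-up sample: a page with spam injected into a hidden element.
SAMPLE_HTML = """
<html><body>
  <h1>Garden tools</h1>
  <div style="display:none">cheap erotica pills</div>
</body></html>
"""

found = find_unexpected_terms(SAMPLE_HTML, ["erotica", "casino"])
print(found)
```

Note that the scan deliberately includes text hidden with CSS, because that is exactly where injected spam tends to sit.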

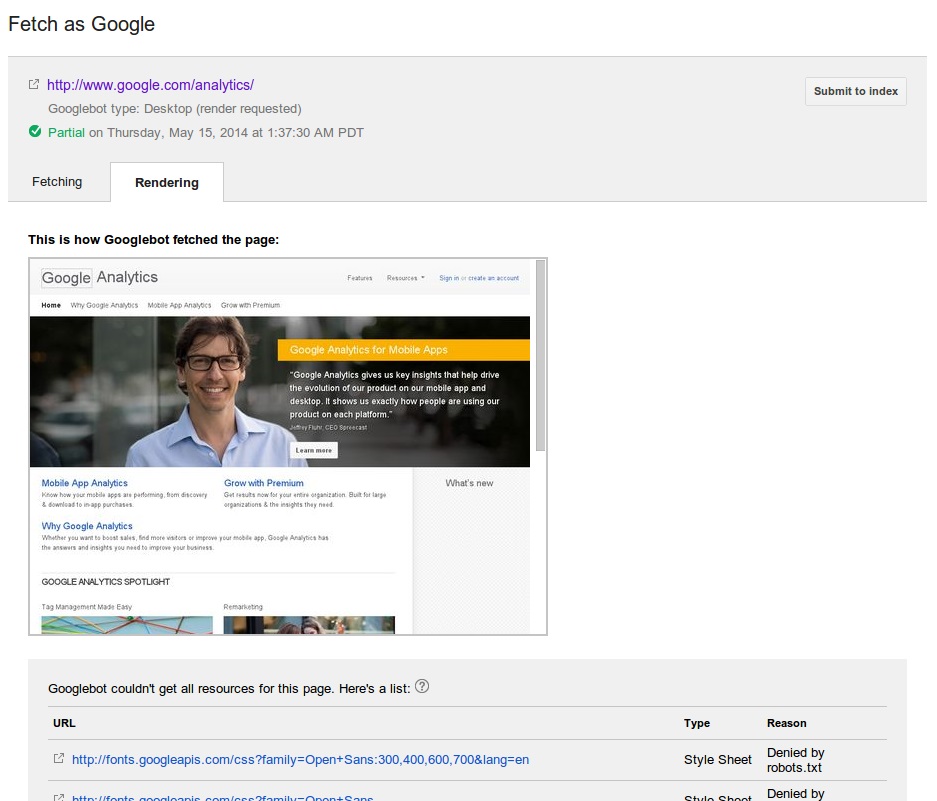

Two days ago I was pleasantly surprised to see a new button called Fetch and Render in the Crawl section of Google Webmaster Tools. Not just that, but also a clever drop-down that lets me choose which device to emulate for the Fetch and Render. Google may have taken a lot with the latest Panda update, but Google also gives. Instead of just the HTML code, we now get a visual representation of what Googlebot actually sees. As we all know, the way Googlebot renders a web page can be completely different from how modern browsers render the same page. For example, this tool will show you if Googlebot is not rendering the H1 tag that holds your focus keyword. It will also show you if any of the resources on the submitted page (or the whole page, for that matter) are blocked from Googlebot’s crawl. I believe this tool is quite useful for identifying why a page is performing badly in search because of crawling errors. If Googlebot can’t render a page the way you intend it to be seen, then obviously this is going to have a negative effect on that page’s ranking in search results.
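Blocked resources usually come down to rules in your robots.txt, so you can get a rough preview before you even open the tool. Here is a minimal sketch using Python’s standard `urllib.robotparser`. The robots.txt rules, the example.com URLs and the helper name `blocked_resources` are assumptions for illustration, and the standard-library parser does not necessarily match Googlebot’s own matching in every detail (wildcards, for instance), so treat the result as a hint rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks the JavaScript directory.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/js/
Disallow: /private/
"""

# Hypothetical resources referenced by the page you want rendered.
PAGE_RESOURCES = [
    "https://example.com/assets/css/site.css",
    "https://example.com/assets/js/app.js",
    "https://example.com/images/logo.png",
]

blocked = blocked_resources(ROBOTS_TXT, PAGE_RESOURCES)
for url in blocked:
    print("Blocked for Googlebot:", url)
```

With these made-up rules only the script under `/assets/js/` is reported, which is the kind of block that can make a rendered page look broken to Googlebot.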

In the end, I’m gonna say that this new tool is something webmasters have been waiting on for a long time. Finally we see a little bit of transparency from Google. And finally we can see our websites through Googlebot’s eyes.
